GIH Blog and News

Healthier Information Ecosystems: Strategies for Health Philanthropy

Our information environment is transforming, including the places and people who help us make decisions about our health. Health information ecosystems are fragmented and filled with information from sources with widely varying expertise, and platform algorithms exert tremendous and unseen control over what messages are seen, shared, and amplified. These changes have many of our traditional health information sources racing to learn new skills to ensure they remain trusted and relevant.

Beyond Innovation: How Philanthropy Can Strengthen Systems to Improve Rural Health Outcomes

Sometimes innovation in philanthropy is associated with breakthrough technologies or new medical discoveries. But some of the most impactful investments fund something less visible: the coordination of people, protocols, and institutions already in place so they work together seamlessly to save lives.

GIH Bulletin

GIH Bulletin: April 2026


GIH Bulletin: March 2026

Grantmakers In Health’s Maya Schane spoke with Stephanie Teleki of The California Health Care Foundation, Laila Bell of The Skillman Foundation, and Jaime Vazquez of The Pew Charitable Trusts about their recently published article in The Foundation Review, “When Shift Happens: Navigating Toward a Framework for Responsible Philanthropic Exits.”

Reports and Surveys

2025 Year in Review

The 2025 Grantmakers In Health (GIH) Year in Review report explores GIH’s strategic pivot in response to devastating federal health policy changes, provides an update on the ongoing implementation of GIH’s strategic plan, and previews GIH’s priorities for 2026.

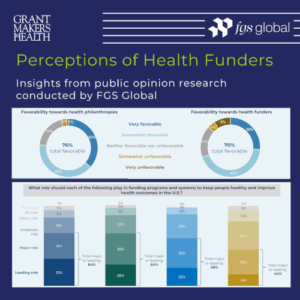

Survey Findings: Perceptions of Health Funders

In July and August 2025, Grantmakers In Health (GIH) conducted research to help health funders better understand how they are viewed by the public. The research included an online survey of engaged voters nationwide and an online focus group with Washington, DC, policy professionals.

An overview of the survey and online focus group findings is now available to all GIH Funding Partners. In addition, this overview was presented on Thursday, November 20, 2025, at the 2025 GIH Health Policy Exchange in Arlington, VA.

GIH Health Policy Update Newsletter

The Latest

An Exclusive Resource for Funding Partners

The Health Policy Update is a newsletter produced in collaboration with Leavitt Partners and Trust for America’s Health. Drawing on GIH’s policy priorities outlined in our policy agenda and our strategic objective of increasing our policy and advocacy presence, the Health Policy Update provides GIH Funding Partners with a range of federal health policy news.